5 Steps to Data Reliability

Are there trust roadblocks that have come between you and your data?

By rebuilding trust and reliability in your data, you can overcome internal objections and drive a cultural shift for more accurate data-driven decisions. We figure it makes sense to demonstrate the value of data transparency, timely analysis, and data visualization before convincing everyone at your company that they’re a good idea.

At LoadSpring, we have a 5-step plan for achieving data reliability:

- Start a transparent data process.

- Implement timely analysis (to find trends).

- Utilize data visualizations (to convey findings & implement change).

- Get everyone on the same page.

- Encourage team collaboration.

Let’s examine each of these steps in depth, exploring specific ways they help contribute to building data reliability.

Step 1: Start a Transparent Data Process

Establishing a transparent data process is in your company’s best interest. But what does that look like in real life?

- Checks and balances: build checks and balances into your data process, and have more than one team collaborate on data checks.

- Data hygiene: set a standard for acceptable and unacceptable data, and set up a system that can remove data that’s not insightful.

- Data policy: create data policies that align with your company mission and purpose to guide your people and process. Make sure you’re proactively sharing your policies and processes versus burying details in the fine print.

- Data disclosure: disclose how data is collected and shared, including naming third-party data sources and partners. Provide clear communications on how customers, consumers, and users benefit from sharing their data.

- Data privacy: use data in aggregate rather than only at the individual level.

- Accountability: form a cross-functional team that ensures legal and industry compliance. It’s important to proactively monitor and prepare for new data regulations and privacy laws.
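As a rough illustration of the data hygiene and data privacy points above, here is a minimal Python sketch. The field names, the 24-hour limit, and the sample records are all hypothetical; your own standard for acceptable data will differ. The idea is simply to filter out records that fail the standard and then report only per-project aggregates, without individual identifiers.

```python
from collections import defaultdict
from statistics import mean

# Hypothetical hygiene standard: required fields and an allowed value range.
REQUIRED_FIELDS = ("project", "user_id", "hours_logged")
MAX_HOURS_PER_DAY = 24.0


def is_acceptable(record: dict) -> bool:
    """Return True if the record meets the (assumed) hygiene standard."""
    if any(record.get(field) is None for field in REQUIRED_FIELDS):
        return False
    hours = record["hours_logged"]
    return isinstance(hours, (int, float)) and 0 <= hours <= MAX_HOURS_PER_DAY


def aggregate_by_project(records: list) -> dict:
    """Summarize acceptable records per project, dropping user identifiers."""
    grouped = defaultdict(list)
    for record in records:
        if is_acceptable(record):
            grouped[record["project"]].append(record["hours_logged"])
    return {
        project: {"entries": len(hours), "mean_hours": round(mean(hours), 2)}
        for project, hours in grouped.items()
    }


# Made-up sample data for illustration only.
records = [
    {"project": "bridge", "user_id": "u1", "hours_logged": 8},
    {"project": "bridge", "user_id": "u2", "hours_logged": 6},
    {"project": "bridge", "user_id": "u3", "hours_logged": 40},  # out of range
    {"project": "tunnel", "user_id": None, "hours_logged": 7},   # missing field
    {"project": "tunnel", "user_id": "u4", "hours_logged": 7.5},
]
summary = aggregate_by_project(records)
print(summary)
```

Keeping the acceptance rule in one small function makes the standard easy to review and share, which fits the goal of a transparent process.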

Step 2: Implement Timely Analysis

Timely analysis to find trends can no longer rely on historical data alone, and BI tools need clean data to work from. Kunal Agarwal, cofounder and CEO of Unravel Data, agrees, stating, “While most data quality metrics focus on accuracy, completeness, consistency, and integrity, another data quality metric that every data ops team should think about prioritizing is data timeliness.”

Best practices for data collection include data visualizations and real-time dashboards for performance monitoring and collaboration related to timely data. Companies that place efficiency and time savings front and center prioritize curated data collection and analysis to save valuable time and gain worthwhile business insights.
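One simple way to track data timeliness is to measure what share of records were updated within an agreed window. The sketch below is an assumption about how such a check might look, not a prescribed method: the 24-hour threshold, the function names, and the sample timestamps are all hypothetical.

```python
from datetime import datetime, timedelta, timezone

# Hypothetical freshness threshold; choose one that fits your reporting cadence.
MAX_AGE = timedelta(hours=24)


def is_timely(last_updated: datetime, now: datetime,
              max_age: timedelta = MAX_AGE) -> bool:
    """Return True if the record was updated within the allowed window."""
    return now - last_updated <= max_age


def timeliness_ratio(timestamps: list, now: datetime,
                     max_age: timedelta = MAX_AGE) -> float:
    """Return the share of records that are still fresh (0.0 if none exist)."""
    if not timestamps:
        return 0.0
    fresh = sum(is_timely(ts, now, max_age) for ts in timestamps)
    return fresh / len(timestamps)


# Made-up timestamps, measured against a fixed reference time.
now = datetime(2024, 1, 10, 12, 0, tzinfo=timezone.utc)
timestamps = [
    now - timedelta(hours=1),
    now - timedelta(hours=23),
    now - timedelta(days=3),
    now - timedelta(days=10),
]
ratio = timeliness_ratio(timestamps, now)
print(f"{ratio:.0%} of records are within the freshness window")
```

A ratio like this could be shown on a real-time dashboard alongside accuracy and completeness metrics.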

Step 3: Utilize Data Visualization

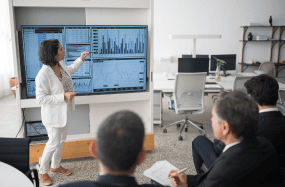

Simple visualizations convey findings clearly, so change can be implemented quickly. Holly Lyke-Ho-Gland recently wrote about how visual data imagery allows organizations to thrive, citing three overarching reasons for implementing visualization tools: a single source of truth, usability, and timeliness. Lyke-Ho-Gland also notes standard implementation challenges that some organizations face: (lack of) training; flexible design; inconsistency; and garbage in, garbage out.

Despite foreseen obstacles, there are several advantages to using good data visualization techniques: first, humans can process visual images better than text, so data visualizations enable viewers to remember information for a more extended time; second, visualizations allow presenters to narrate stories while helping viewers understand data and draw conclusions from it; lastly, viewers can recognize emerging trends, correlated patterns, and parameters, accelerating response time according to what is seen and assimilated.

Several data visualization techniques are recommended for qualitative research: word clouds, graphic timelines, icons beside descriptions, heat maps, and mind maps. But how is trust established in information visualizations? According to recent research, factors that influence the trustworthiness of information include its accuracy, currency, coverage, objectivity, and validity or predictability.

To give you a more definite sense of real-world effectiveness, research by Stanford University’s Robert Horn indicates several benefits of using visual aids to accompany verbal communication:

- 64% of participants made an immediate decision following presentations that used an overview map.

- The use of visual language is proven to increase meeting effectiveness and efficiency, leading to 24% shorter meetings.

- Groups using visual language have experienced a 21% increase in reaching consensus compared to groups that did not use visuals.

- Combined visual and verbal communication increases credibility and influence rate to 43%, compared to 17% when only verbal communication is used.

Step 4: Get Everyone on the Same Page and Aiming to Achieve Data Reliability

There is little doubt that company-wide data governance will be a proverbial game-changer. McKinsey recently published an article stressing how important it is that “nearly all employees naturally and regularly leverage data to support their work.” This is a critical component of digital transformation: the company-wide integration of change, rather than siloed approaches that depend on the department or team in question.

According to the 2022 Edelman Trust Barometer, which Forbes cites as an annual global survey on trust in institutions, two-thirds of people believe that authority figures (journalists, government leaders, and business executives) “flat-out lie.” In short, we have a post-Covid cynicism problem.

We must work on changing that, one work or team culture at a time. To do this, we must create a culture for believing and trusting data.

This means that all teams must get on board, from the top down or the bottom up. However it happens, as with all digital transformation, data reliability can only come from an integrated, comprehensive effort to challenge the norms of times past and embrace what’s to come, including what the data is trying to say.

Step 5: Team Collaboration

For productive team collaboration to occur, team leaders must first break down the silos to create a common goal: data transparency and reliability. Moving forward, key roles should be created: data stewards and data quality analysts from every part of the organization. Furthermore, leaders should encourage good data practices to increase organizational trust in data gradually.

As Venture Beat stresses, concerning product teams: “To improve cross-functional collaboration, reorient your product team leadership to consist of a product manager, an engineering lead, and a design lead (a.k.a. “the trio”). Each should collaborate equally on decisions that ensure the technical, business, and user needs are considered as the product and its processes grow in maturity.”

According to John Cutler from Amplitude, “Analysis is a team sport.” This means that, ideally, teams work together toward data-related tasks and goals. Cutler stresses the importance of focusing on the usability and accessibility of the data, as well as adopting consistent labeling conventions. Also, he states that, ideally, analytics experts should operate less as a question/answer service and more as force multipliers, using data more for framing opportunities and promising options.
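To make the idea of consistent labeling conventions a little more concrete, here is a small sketch. It assumes a lowercase snake_case convention, which is only one possible choice and is not something Cutler prescribes; the function names and sample labels are hypothetical.

```python
import re

# Hypothetical convention: lowercase snake_case, e.g. "task_completed".
LABEL_PATTERN = re.compile(r"^[a-z0-9]+(_[a-z0-9]+)*$")


def is_consistent(label: str) -> bool:
    """Return True if the label already follows the assumed convention."""
    return bool(LABEL_PATTERN.match(label))


def normalize_label(label: str) -> str:
    """Convert a free-form label (spaces, hyphens, camelCase) to snake_case."""
    # Insert a space at lowercase-to-uppercase boundaries, e.g. "taskDone".
    spaced = re.sub(r"(?<=[a-z0-9])(?=[A-Z])", " ", label.strip())
    # Keep only alphanumeric runs, then join them with underscores.
    words = re.findall(r"[A-Za-z0-9]+", spaced)
    return "_".join(word.lower() for word in words)


# Made-up labels of the kind different teams might use for the same event.
raw_labels = ["Task Completed", "taskCompleted", "task-completed", "task_completed"]
normalized = [normalize_label(label) for label in raw_labels]
print(normalized)
```

Running every label through one shared function means that teams arrive at the same name no matter how each one originally wrote it.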

If you are hoping for a smooth transition but aren’t sure how to achieve it, recruit the help of an expert: consider enlisting LoadSpring to help you get everyone on board.

LoadSpring provides the only analytics system designed for data transparency. From inside our signature project platform, LoadSpring Cloud Platform and ProjectINTEL work with you to identify the questions you want to answer and build a data schema using various applications, so the data informs the answer. Move away from anecdotal answers to a world based on empirical data with LoadSpring Cloud Platform.

When you’re ready, take your insights to the next level. LoadSpring Cloud Platform provides a clean data lake. From there, LoadSpring cloud experts and business analysts can help customize how your data is visually presented. ProjectINTEL will consolidate your data into one automated dashboard view. Then, since you’ll have access to your data, you can build custom visualizations of selected data points from wherever you can access the Internet and your team’s project platform.

If you are scanning the horizon for a new perspective on data reporting capabilities, look no further than LoadSpring: contact us today.